NEW NVIDIA Volta-Powered DGX-1: Supercomputer

22 May 2017

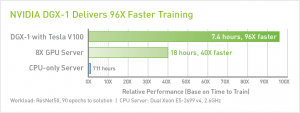

The new NVIDIA DGX-1 is similar to the previous-generation, Pascal-based system, but is powered by eight Tesla V100 GPUs linked by next-generation NVIDIA NVLink interconnect technology, which raises the bandwidth per GPU to 300GB/s.

The rest of the system consists of:

- Dual 20-core Intel® Xeon® E5-2698 v4 CPUs

- 512GB of RAM

- Four 1.92TB SSDs in RAID 0

- A pair of 10GbE connections

The system has a total of 40,960 CUDA cores and 5,120 Tensor cores, with 128GB of total GPU memory, spread across the eight Tesla V100 processors. All told, the Volta-infused DGX-1 offers up to 960 TFLOPS of FP16 compute performance, versus 170 TFLOPS for the original, with significantly more bandwidth on tap.
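As a quick sanity check on these totals, the short Python sketch below divides the system-level figures quoted above by the eight GPUs to recover per-GPU values, and compares the two FP16 numbers. It assumes the totals are split evenly across the GPUs. The variable and function names are illustrative only and do not come from any NVIDIA tool.

```python
# System-level figures quoted in the article for the Volta-based DGX-1.
NUM_GPUS = 8
TOTAL_CUDA_CORES = 40_960
TOTAL_TENSOR_CORES = 5_120
TOTAL_GPU_MEMORY_GB = 128
TOTAL_FP16_TFLOPS = 960
PASCAL_FP16_TFLOPS = 170  # original Pascal-based DGX-1, as quoted


def per_gpu(total, num_gpus=NUM_GPUS):
    """Split a system total evenly across the GPUs (assumed even split)."""
    return total / num_gpus


per_gpu_figures = {
    "cuda_cores": per_gpu(TOTAL_CUDA_CORES),
    "tensor_cores": per_gpu(TOTAL_TENSOR_CORES),
    "gpu_memory_gb": per_gpu(TOTAL_GPU_MEMORY_GB),
    "fp16_tflops": per_gpu(TOTAL_FP16_TFLOPS),
}

# Ratio of quoted FP16 throughput: Volta-based vs. Pascal-based system.
fp16_speedup = TOTAL_FP16_TFLOPS / PASCAL_FP16_TFLOPS

for name, value in per_gpu_figures.items():
    print(f"{name}: {value:g}")
print(f"FP16 speedup over Pascal-based DGX-1: {fp16_speedup:.2f}x")
```

Under that even-split assumption, each V100 accounts for 5,120 CUDA cores, 640 Tensor cores, 16GB of memory, and 120 TFLOPS of the quoted FP16 figure.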

SYSTEM SPECIFICATIONS

| Model | NVIDIA DGX-1 | |
|---|---|---|
| GPUs | 8X Tesla V100 | 8X Tesla P100 |
| TFLOPS (GPU FP16) | 960 | 170 |
| GPU Memory | 128 GB total system | |
| CPU | Dual 20-Core Intel® Xeon® E5-2698 v4 2.2 GHz | |
| NVIDIA CUDA® Cores | 40,960 | 28,672 |
| NVIDIA Tensor Cores (on V100 based systems) | 5,120 | N/A |
| Maximum Power Requirements | 3,200 W | |
| System Memory | 512 GB 2,133 MHz DDR4 LRDIMM | |
| Storage | 4X 1.92 TB SSD RAID 0 | |
| Network | Dual 10 GbE, Up to 4 IB EDR | |
| Software | Ubuntu Linux Host OS (see Software Stack for details) | |
| System Weight | 134 lbs | |
| System Dimensions | 866 D x 444 W x 131 H (mm) | |
| Packing Dimensions | 1,180 D x 730 W x 284 H (mm) | |
| Operating Temperature Range | 10–35 °C | |

The new NVIDIA DGX-1 will be available at XENON. Customers who buy one today can receive the Pascal-based version and have the Tesla P100s swapped for V100s when they become available.

Click on the thumbnail to view the PDF.
