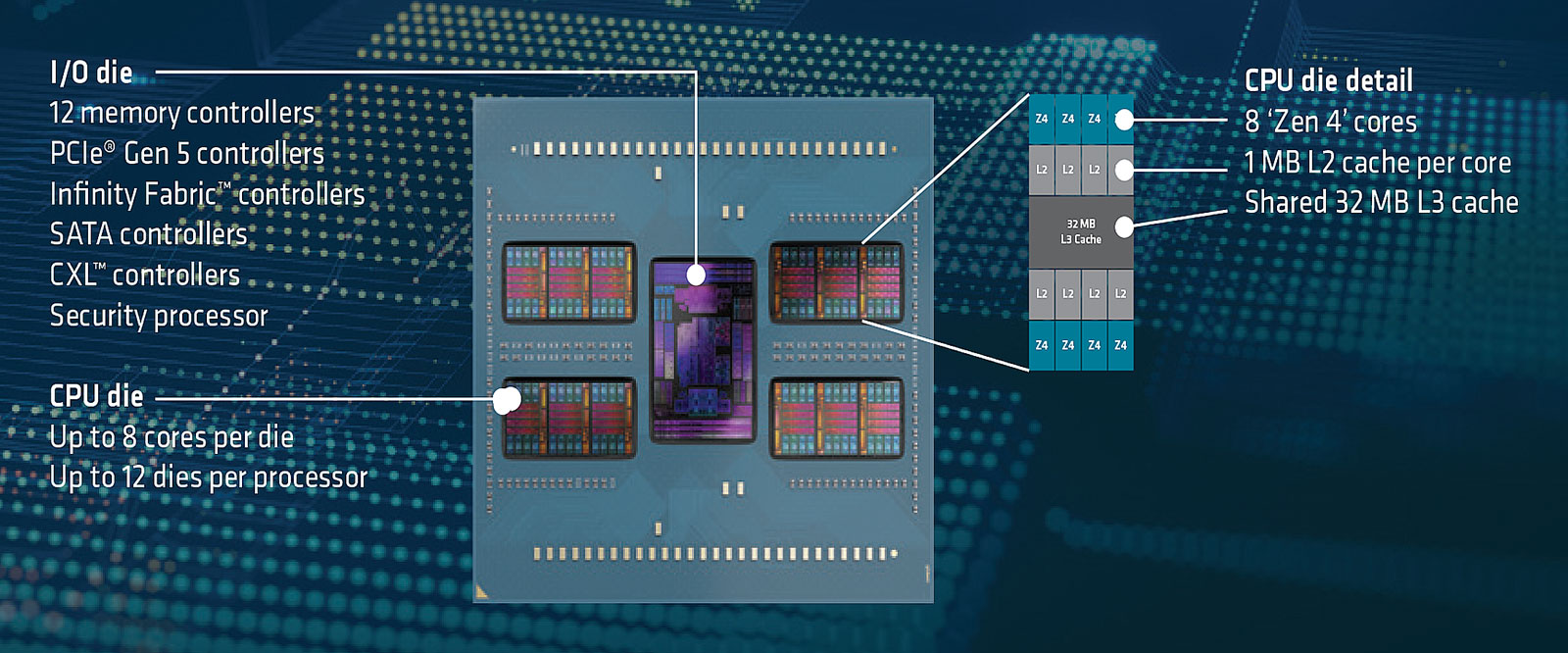

AMD EPYC Fourth Generation CPUs

02 Dec 2022

AMD has released details of the new EPYC Fourth Generation data centre CPUs (“Genoa” series). We’ve had a chance to have a detailed look under the hood with AMD, and have the highlights of the updates for HPC/AI and data intensive workloads.

More Cores, More Performance

The new CPUs take the maximum core count to 96, up from 64 in the previous generation. The increase in core count, together with the higher efficiency of this new processor generation, provides a more than 2x increase in integer and floating point performance compared to the previous generation, accelerating a range of data intensive workloads.

The jump in core counts and performance creates upgrade opportunities: a large number of legacy servers can be replaced with fewer than half as many EPYC based servers. This consolidation will save colocation costs and reduce power consumption in the data centre.
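As a rough illustration of the consolidation arithmetic above, the sketch below uses only the figures quoted in this article (64 to 96 cores, and a 2x generational gain taken as a lower bound). The function names and the 100-server fleet are hypothetical, and the per-server speedup for a real legacy fleet is an input you would need to measure on your own workloads.

```python
import math


def implied_per_core_uplift(total_speedup: float, old_cores: int, new_cores: int) -> float:
    """Per-core gain implied once the core-count increase is factored out."""
    return total_speedup / (new_cores / old_cores)


def servers_needed(legacy_servers: int, per_server_speedup: float) -> int:
    """New servers needed to match the aggregate throughput of a legacy fleet.

    Assumes the workload scales across servers and that per_server_speedup
    has been measured for that workload (an assumption, not a guarantee).
    """
    return math.ceil(legacy_servers / per_server_speedup)


if __name__ == "__main__":
    # 96 cores vs 64 cores, with the quoted 2x taken as a floor.
    uplift = implied_per_core_uplift(2.0, old_cores=64, new_cores=96)
    print(f"Implied per-core uplift: at least {uplift:.2f}x")

    # Hypothetical fleet of 100 legacy servers.
    print(f"Servers needed at 2.0x: {servers_needed(100, 2.0)}")
    print(f"Servers needed at 2.5x: {servers_needed(100, 2.5)}")
```

Because the quoted gain is "more than 2x", the 2.0x case is the conservative end of the estimate; any speedup above that pushes the server count below half.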

Faster Memory

The new EPYC architecture supports DDR5-4800 memory (up to 6TB) as well as PCIe Generation 5. In a two-socket server, the EPYC CPUs will support up to 160 lanes of PCIe Gen5 connectivity.

The L3 cache also grows to a maximum of 384 MB.
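To put the DDR5-4800 and PCIe Gen5 figures above into bandwidth terms, here is a minimal back-of-envelope sketch. It gives theoretical peaks only. The PCIe Gen5 signalling rate (32 GT/s with 128b/130b encoding) and the 64-bit DDR5 channel width come from the respective standards rather than from this article, and the 12-channel figure in the usage lines is our assumption about the platform, not something stated above. Function names are illustrative.

```python
def pcie_lane_gb_per_s(gt_per_s: float = 32.0, encoding: float = 128 / 130) -> float:
    """Theoretical GB/s per lane, per direction (PCIe Gen5 defaults)."""
    return gt_per_s * encoding / 8  # 8 bits per byte


def ddr_channel_gb_per_s(mt_per_s: int = 4800, bus_bytes: int = 8) -> float:
    """Theoretical GB/s for one 64-bit memory channel (DDR5-4800 defaults)."""
    return mt_per_s * bus_bytes / 1000


if __name__ == "__main__":
    lanes = 160  # two-socket figure quoted in the article
    pcie_total = lanes * pcie_lane_gb_per_s()
    print(f"PCIe Gen5, {lanes} lanes: ~{pcie_total:.0f} GB/s per direction")

    channels = 12  # ASSUMPTION: channels per socket, not stated in the article
    mem_total = channels * ddr_channel_gb_per_s()
    print(f"DDR5-4800, {channels} channels: ~{mem_total:.1f} GB/s per socket")
```

Sustained throughput in a real system will be lower than these peaks once protocol overhead and memory access patterns are taken into account.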

CXL Support

The EPYC CPUs support the new CXL cache coherent link between CPU and other devices like accelerators, smart NICs, and memory devices. With a number of memory specialists like MemVerge developing innovative solutions for CXL, this will be an interesting space to watch.

More Power needs More Power!

Power draw on the 3rd generation EPYC was 155-280W. The higher core counts see the TDP increase to 200-400W. Managing this power draw, and the associated heat, will be key in designing systems and HPC clusters to maximise the performance and longevity of solutions built on the new 4th Generation EPYC.
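A quick way to read these numbers is per socket versus per core. The sketch below uses the top-of-range TDP and maximum core count quoted in this article for each generation. It assumes the highest TDP corresponds to the highest core count part, which the article does not state explicitly, and the function name is illustrative.

```python
def watts_per_core(tdp_watts: float, cores: int) -> float:
    """Nominal TDP divided evenly across cores (a coarse planning figure)."""
    return tdp_watts / cores


if __name__ == "__main__":
    # Top-of-range figures from the article; pairing max TDP with max cores
    # is an ASSUMPTION made for illustration.
    gen3 = watts_per_core(280, 64)
    gen4 = watts_per_core(400, 96)

    print(f"3rd Gen: {gen3:.3f} W per core")
    print(f"4th Gen: {gen4:.3f} W per core")
    print(f"Per-socket TDP increase: {400 / 280:.2f}x")
    print(f"Per-core TDP change: {gen4 / gen3:.2f}x")
```

On these figures the per-socket power budget rises by roughly 1.4x while the nominal power per core falls slightly, so the cooling challenge is mainly one of density: more heat per socket and per rack unit, rather than less efficient cores.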

AMD EPYC 9004 Series processor overview

Download the AMD EPYC Processor Architecture White Paper Here

Design Your System Now

XENON delivers bespoke servers and high performance computing clusters with AMD CPUs, and we look forward to discussing your data and application requirements to build the best new system for your needs.

Talk to a Solutions Architect