Why NVIDIA GTC 2026 Matters: XENON’s Take on NVIDIA, AI Factories and What Comes Next

31 Mar 2026

From promise to production

Dr Werner Scholz,

CTO and Head of R&D

At the NVIDIA GTC 2026 conference, one message came through loud and clear: AI is moving from promise to production.

For Dr Werner Scholz, CTO and Head of R&D at XENON Systems, one of the most compelling signals was not just the scale of NVIDIA’s roadmap, but how clearly the company is now organising around the real-world deployment of AI.

A strong example is NVIDIA DGX Spark™ pre-installed with NemoClaw. It points to a future where powerful AI infrastructure is not only faster and more capable, but also easier to adopt, secure and operationalise. That matters because, for many organisations, the challenge is no longer understanding AI’s potential. It is getting trusted platforms into production quickly, with the right software stack already in place.

A shift in how AI is built and deployed

This year’s keynote also reinforced just how quickly the market is evolving.

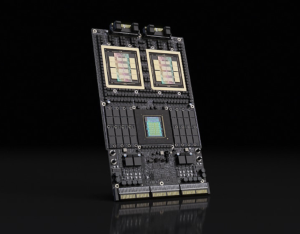

NVIDIA’s business is now centred around three major platforms: CUDA-X, AI factories and AI supercomputing systems.

- CUDA-X reflects two decades of investment in software and the developer ecosystem.

- AI factories represent the next phase, where compute, networking, storage and software are brought together as a purpose-built engine for AI outcomes.

- AI supercomputing systems such as DGX, HGX™, MGX™ and rack-scale platforms provide the underlying infrastructure powering those factories.

That shift is significant. For years, much of the conversation around AI infrastructure focused on training ever-larger foundation models. That work remains essential. However, the industry is now entering a new phase where inference is becoming just as important and, in many cases, more commercially immediate.

From training to inference

In simple terms, training teaches a model how to think. Inference is what happens when that model is used in the real world, whether for copilots, search, automation, simulation or agentic AI.

As more organisations move from experimentation to deployment, demand is rising for infrastructure designed not only to build AI models, but to run them efficiently, securely and at scale.

That was one of Dr Scholz’s key takeaways from the keynote. NVIDIA is not just building chips. It is building the foundations for a new generation of AI-enabled systems and services.

The roadmap: Rubin and beyond

Another major theme was the company’s roadmap.

The Vera Rubin platform is now moving into full chip production. Rack-scale systems such as the Vera Rubin NVL72, which unifies 72 Rubin GPUs and 36 Vera CPUs, are set to become available later this year, while Rubin Ultra is progressing through testing and will follow soon after. Looking ahead, NVIDIA also previewed the next generation, Feynman, which will introduce advances across CPUs, GPUs, NVLink™, networking, BlueField and co-packaged optics.

For a general audience, the detail can sound abstract. However, the practical implication is straightforward: AI infrastructure is becoming more integrated, more specialised and more ambitious with every generation. Innovation is no longer happening in isolated components. It is happening across the entire stack, lowering the cost of producing AI tokens at scale and making the growing demand for AI easier to meet.
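To make the cost point concrete, a simplified way to think about it is that the cost of serving tokens is roughly a system's hourly running cost divided by the number of tokens it produces in that hour. The sketch below uses purely hypothetical figures (not NVIDIA or XENON data, and the function name is illustrative) to show how higher throughput at the same hourly cost lowers the cost per million tokens.

```python
def cost_per_million_tokens(hourly_cost: float, tokens_per_second: float) -> float:
    """Simplified serving cost per one million tokens.

    hourly_cost: all-in cost of running the system for one hour (hypothetical units).
    tokens_per_second: sustained token throughput of the system.
    """
    if tokens_per_second <= 0:
        raise ValueError("throughput must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical figures for illustration only: same hourly cost, doubled throughput.
baseline = cost_per_million_tokens(hourly_cost=100.0, tokens_per_second=10_000)
next_gen = cost_per_million_tokens(hourly_cost=100.0, tokens_per_second=20_000)
print(f"baseline: {baseline:.2f} per million tokens")
print(f"next gen: {next_gen:.2f} per million tokens")
```

Doubling throughput on the same hourly budget halves the cost per token, which is the lever that tighter integration across the stack is aiming at.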

Why data movement now matters as much as compute

Dr Scholz also highlighted the growing importance of storage and data movement. As AI systems become more capable, getting data into the right place at the right speed becomes just as important as raw processing power.

That is why developments in storage platforms, networking and domain-scale GPU connectivity matter so much. The future of AI performance will depend on how well the entire system works together.
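One common way to reason about this balance is the roofline model: a workload's attainable throughput is capped either by the processor's peak compute or by memory bandwidth multiplied by the workload's arithmetic intensity (operations performed per byte moved), whichever is lower. The sketch below applies it with illustrative numbers that are assumptions, not specifications of any NVIDIA product; the function names are hypothetical.

```python
def attainable_flops(peak_flops: float, bandwidth_bytes_per_s: float,
                     arithmetic_intensity: float) -> float:
    """Roofline model: performance is bounded by compute or by data movement.

    arithmetic_intensity: floating-point operations per byte moved from memory.
    """
    memory_bound = bandwidth_bytes_per_s * arithmetic_intensity
    return min(peak_flops, memory_bound)


def ridge_point(peak_flops: float, bandwidth_bytes_per_s: float) -> float:
    """Arithmetic intensity at which a workload stops being memory-bound."""
    return peak_flops / bandwidth_bytes_per_s


# Hypothetical accelerator: 1,000 TFLOP/s peak compute, 8 TB/s memory bandwidth.
PEAK = 1_000e12
BW = 8e12

for intensity in (10, 125, 500):
    perf = attainable_flops(PEAK, BW, intensity)
    bound = "memory-bound" if perf < PEAK else "compute-bound"
    print(f"{intensity:>4} FLOP/byte -> {perf / 1e12:,.0f} TFLOP/s ({bound})")
```

Below the ridge point (125 FLOP/byte for these assumed figures), adding more compute does not help; only moving data faster raises throughput, which is why storage, networking and interconnects weigh as heavily as raw processing power.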

When infrastructure becomes a physical challenge

One of the more thought-provoking moments in the keynote was NVIDIA’s discussion of data centres in space.

While it may sound futuristic, it highlights a very real engineering challenge: cooling. On Earth, systems can reject heat through conduction and convection, for example into air or water. In the vacuum of space there is no surrounding air or water to carry heat away, so heat can only be radiated, fundamentally changing the design equation.
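The physics behind this is the Stefan-Boltzmann law: a surface radiating into space sheds power P = εσAT^4, where ε is the surface's emissivity, σ ≈ 5.67 × 10^-8 W m^-2 K^-4, A is the radiating area and T is its absolute temperature. The sketch below estimates the radiator area needed for a given heat load under simplifying assumptions (a single radiating side, a 0 K background, no absorbed sunlight) and a hypothetical 100 kW heat load; the function name and inputs are illustrative only.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_load_w: float, temperature_k: float,
                     emissivity: float = 0.9) -> float:
    """Area needed to radiate heat_load_w from a surface at temperature_k.

    Simplifying assumptions: one radiating side, a 0 K background,
    and no absorbed sunlight or Earth-shine.
    """
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    flux_w_per_m2 = emissivity * STEFAN_BOLTZMANN * temperature_k ** 4
    return heat_load_w / flux_w_per_m2


# Hypothetical 100 kW heat load with radiators running at 300 K.
area = radiator_area_m2(heat_load_w=100_000, temperature_k=300)
print(f"Radiator area needed: {area:.0f} m^2")
```

Under these assumptions the result is roughly 240 m² of radiator for 100 kW. Because radiated flux scales with T^4, running radiators hotter shrinks the required area sharply, which is one reason thermal design looks so different in orbit.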

For Dr Scholz, moments like that are a reminder that the AI industry is now operating at a scale where infrastructure challenges are as much physical as they are digital.

Power, cooling, density, networking and deployability are no longer secondary considerations. They are central to the future of AI.

Bridging innovation and real-world adoption

This is also why platforms such as OpenClaw and NemoClaw stand out.

Secure, enterprise-ready agentic platforms are not just interesting because they are new. They are important because they help bridge the gap between breakthrough technology and practical adoption. Organisations want innovation, but they also need trust, control and a clear path into production.

NemoClaw in particular deserves attention: it is an open-source stack that installs in a single command, adding NVIDIA OpenShell security and privacy guardrails to the OpenClaw agent platform. It runs on dedicated local hardware — including NVIDIA DGX Spark and NVIDIA DGX Station™ — or scales into cloud and AI factory environments, giving organisations a practical path to deploy always-on AI agents with defined permission and privacy controls.

A milestone moment for XENON

For XENON Systems, GTC 2026 reinforced a view the team has held for some time: the winners in the next phase of AI will not be defined by hardware alone. They will be defined by how effectively hardware, software, storage, networking and operational readiness come together.

It was also a fitting moment for XENON in the region. XENON has been recognised as NVIDIA Enterprise Partner of the Year in ANZ for the third year in a row, reflecting the company’s continued focus on delivering world-class accelerated computing and AI infrastructure to customers across Australia and New Zealand.

The AI era is now operational

From AI factories to secure agentic platforms, from next-generation systems to the physical realities of operating them at scale, GTC 2026 made one thing clear: the AI era is no longer coming. It is here, and it is becoming operational.

Images supplied by NVIDIA.