Global Universities Fast Tracking Their Research

17 Mar 2022

Five Universities fast-tracking their research IT and AI capabilities with NVIDIA DGX Systems

The World’s First Portfolio of Purpose-built AI Supercomputers is Ready to Supercharge Discovery, Right Out of the Box

IT systems for higher education and research have never been more critical. Modern computer systems must be ever more powerful to enable the future-shaping work of faculty, researchers and students.

Universities are the beating heart of innovation, advancing the application of human knowledge in research and discovery. As artificial intelligence (AI) changes virtually every industry, institutions offering superior compute power are attracting the best and brightest undergraduate and postgraduate students.

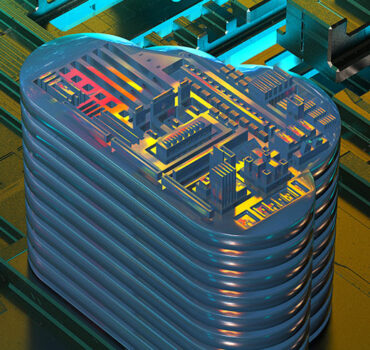

Inspired by the demands of deep learning and data analytics, NVIDIA DGX™ systems are fully integrated solutions that combine the world’s most powerful GPU technology with innovative, GPU-optimised software and simplified management for groundbreaking performance and results. NVIDIA DGX systems give data scientists and researchers the fastest start in artificial intelligence, enabling AI exploration across desk, data centre, and cloud. They are a supercomputing solution that works right out of the box, unlocking insights in hours instead of weeks or months.

XENON has a quarter-century of experience delivering high performance computing solutions in Australia and is the only NVIDIA Elite Partner in the region accredited across all NVIDIA technologies, and the preferred higher-education specialist for NVIDIA DGX systems. With deep expertise in AI compute solutions, XENON is equipped to plan and install the NVIDIA DGX A100 and put any institution on a fast track to AI excellence.

NVIDIA has forged close relationships with many of the world’s most respected universities and research institutions. These partnerships enable NVIDIA to collaborate with leading researchers and to keep building connections with research facilities working to solve the world’s most complex scientific challenges. XENON is a natural partner for NVIDIA in this area, with a long history of providing leading-edge solutions to the largest research organisations in Australia, New Zealand and across APAC – including CSIRO, NCI and Pawsey, as well as the leading universities and health research institutes.

The five universities and research institutes profiled below all use NVIDIA DGX systems, showing how broad the application of data science and AI is today. As that application grows, institutions that don’t prioritise their compute resources risk being left behind.

Using Social Media for Good at Germany’s Deep Learning Competence Center (DFKI)

As climate change advances, natural disasters are increasingly common, causing major destruction to life, property and economies. Germany’s Deep Learning Competence Center (DFKI) is using an NVIDIA DGX system to create DeepEye, an innovative crisis-management tool that harnesses social media outputs for positive outcomes.

Using a variety of AI methods, including Convolutional Neural Networks, DeepEye takes satellite images and enriches them with social media content from the disaster area. AI enables the extraction of contextual aspects to assemble a comprehensive view of the event.

Powerful NVIDIA graphics processing units (GPUs) enable the training of complex neural networks with large amounts of data. With increased GPU memory and fully connected GPUs based on the NVSwitch™ architecture, DFKI can build bigger models and process more data to aid rescuers in their decision making for faster, more efficient dispatching of resources in a crisis.

Creating Beautiful Music with AI at Monash University

Music plays an impactful role in the experience of interactive media, but it’s challenging for composers to produce music for dynamic, user-driven situations.

Monash University has long been an advocate for GPU computing technologies and turned to NVIDIA to create SensiLab, a $5 million state-of-the-art research lab and the only one in Australia with a dedicated NVIDIA DGX supercomputer for creative AI and visualisation.

Researchers at Monash University’s SensiLab used the NVIDIA DGX system to develop a new deep learning framework for adaptively scoring interactive media that’s been successfully implemented in two video games.

Accelerating the Diagnosis of COVID-19 Severity at the Martinos Center

Around the world, researchers have been racing to develop AI tools to battle COVID-19, including at Massachusetts General Hospital in Boston, Massachusetts, USA. There, the Martinos Center for Biomedical Imaging has used NVIDIA DGX A100 to accelerate its AI work.

Researchers developed models to segment and align multiple chest scans, calculate lung-disease severity from X-ray images, and combine radiology data with other clinical variables to predict outcomes in COVID patients.

Built and tested using data from Mass General Brigham hospital, these models, once validated, could be used beyond the pandemic to bring radiology insights closer to the clinicians tracking patient progress and making treatment decisions. “Using deep learning, we developed an algorithm to extract a lung-disease severity score from chest X-rays that’s reproducible and scalable,” says Matthew D. Li, a radiology resident at Mass General.

The Martinos Center uses a variety of NVIDIA DGX systems to accelerate its research, and last year installed NVIDIA DGX A100 systems, each built with eight NVIDIA A100 Tensor Core GPUs and delivering 5 petaflops of AI performance.

Director of the Martinos Center’s Laboratory for Computational Neuroimaging Bruce Fischl says NVIDIA DGX systems help Martinos Center researchers iterate more quickly, experimenting with different ways to improve their AI algorithms. DGX A100, with NVIDIA A100 GPUs based on the NVIDIA Ampere architecture, will further speed the team’s work with third-generation Tensor Core technology.

“Quantitative differences make a qualitative difference,” says Fischl. “I can imagine five ways to improve our algorithm, each of which would take seven hours of training. If I can turn those seven hours into just an hour, it makes the development cycle so much more efficient.”
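The arithmetic behind Fischl's example can be written out as a minimal sketch. It assumes the five ideas are trained one after another on the same system, which the quote does not state; the function and variable names are illustrative only.

```python
def sweep_hours(num_ideas: int, hours_per_run: float) -> float:
    """Total training time when each idea is trained in turn (assumed sequential)."""
    return num_ideas * hours_per_run


ideas = 5                        # "five ways to improve our algorithm"
before = sweep_hours(ideas, 7)   # seven hours of training per idea
after = sweep_hours(ideas, 1)    # one hour of training per idea

print(f"Before: {before} h, after: {after} h, saved: {before - after} h")
```

Under that assumption, a full round of experiments shrinks from most of a working week to a single afternoon, which is the qualitative difference Fischl describes.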

The Martinos Center will use NVIDIA Mellanox switches and VAST Data storage infrastructure, enabling its developers to use NVIDIA GPUDirect technology to bypass the CPU and move data directly into or out of GPU memory, achieving better performance and faster AI training.

Simulating the Planet to Predict Natural Disasters at JAMSTEC

The Japan Agency for Marine-Earth Science and Technology, or JAMSTEC, has built the fourth generation of its Earth Simulator, based around SX-Aurora TSUBASA vector processors from NEC and NVIDIA A100 Tensor Core GPUs, all connected with NVIDIA Mellanox HDR 200Gb/s InfiniBand networking. The Earth Simulator has a maximum theoretical performance of 19.5 petaflops, putting it in the highest echelons of the TOP500 supercomputer ratings.

Its work will span marine resources, earthquakes and volcanic activity. Scientists will gain deeper insights into cause-and-effect relationships in areas such as crustal movement and earthquakes. The Earth Simulator will be deployed to predict and mitigate natural disasters, potentially minimising loss of life and damage in the event of another natural disaster like the earthquake and tsunami that hit Japan in 2011.

Earth Simulator will achieve this by running large-scale simulations at high speed in ways previous generations of Earth Simulators couldn’t. The intent is also to have the system play a role in helping governments develop a sustainable socio-economic system.

The new Earth Simulator promises to deliver a multitude of vital environmental information. The fourth-generation model will deliver more than 15x the performance of its predecessor, while keeping the same level of power consumption and requiring around half the footprint. It’s able to achieve these feats thanks to major research and development efforts from NVIDIA and NEC.

The latest processing developments are also integral to the Earth Simulator’s ability to keep up with rising data levels. Scientific applications used for earth and climate modelling are generating increasing amounts of data that require the most advanced computing and network acceleration to give researchers the power they need to simulate and predict our world.

NVIDIA Mellanox HDR 200Gb/s InfiniBand networking with in-network compute acceleration engines, combined with NVIDIA A100 Tensor Core GPUs and NEC SX-Aurora TSUBASA, provides JAMSTEC with a world-leading marine research platform critical for expanding earth and climate science and accelerating discoveries.

Teaching Robots to Learn at UC Berkeley

Living creatures learn about their physical environment by poking at things, pushing things and observing what happens. This could be the key to robotic intelligence, too. It’s called reinforcement learning, and at Professor Sergey Levine’s Robotic Artificial Intelligence and Learning Lab at UC Berkeley, it’s powered by NVIDIA GPUs and an NVIDIA DGX system. Levine says this GPU compute power brings two significant benefits to AI: First, it speeds up training and “allows us to do science faster”. Second, inference on GPUs allows for real-time response, which is “a really big deal for robots”.
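For readers new to the idea, the trial-and-error loop behind reinforcement learning can be shown with a toy example. The sketch below is not the Berkeley lab's method: it uses tabular Q-learning, a textbook technique chosen here for brevity, on a made-up five-cell corridor where an agent earns a reward only on reaching the last cell. The environment, the function name `train_corridor` and all parameter values are illustrative assumptions, and it runs on a CPU in well under a second.

```python
import random


def train_corridor(episodes=200, n_states=5, alpha=0.5, gamma=0.9,
                   epsilon=0.2, seed=0):
    """Tabular Q-learning on a hypothetical 1-D corridor (goal = last cell)."""
    rng = random.Random(seed)
    goal = n_states - 1
    # q[state][action]: action 0 = step left, action 1 = step right
    q = [[0.0, 0.0] for _ in range(n_states)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            # Explore at random sometimes, or whenever there is no preference yet
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            # "Poke" the environment and observe what happens
            next_state = max(0, state - 1) if action == 0 else state + 1
            reward = 1.0 if next_state == goal else 0.0
            # The goal cell is terminal, so it carries no future value
            future = 0.0 if next_state == goal else max(q[next_state])
            q[state][action] += alpha * (reward + gamma * future - q[state][action])
            state = next_state
            if state == goal:
                break
    return q


q_table = train_corridor()
# Preferred action in each non-goal cell after training
policy = ["right" if right > left else "left" for left, right in q_table[:-1]]
print(policy)
```

Real robotic systems replace the small table with deep neural networks and the corridor with camera images and motor commands, which is where GPU training and real-time GPU inference come in.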

XENON – Enabling Researchers to Do Great New Things

For over 25 years XENON has been delivering innovative solutions for universities and research teams looking to iterate faster, collaborate more, and develop solutions for tomorrow’s problems today.

Get in touch with the XENON team to explore solutions for your IT challenges, and learn how to fast track your IT capabilities and deliver more capability for your research teams.
