NVIDIA Data Centre GPUs

NVIDIA® Data Centre GPUs bring the latest parallel GPU processing to a range of applications, from data science and research to artificial intelligence, machine learning and more. XENON can design a server with the right power, cooling and memory to support single or multiple GPUs. XENON also builds workstation solutions with these GPUs, bringing GPU computing to a desktop form factor that runs at ambient room temperature on a standard power supply. Contact the XENON solutions team to discover which NVIDIA GPU is right for your requirements.

For a limited time, a four-hour, self-paced course, AI in the Data Centre, is available for up to three team members with each NVIDIA Data Centre GPU purchased. Spaces are limited. Contact us to learn more.

Download a summary of the NVIDIA Data Center GPU Platform here.

NVIDIA L40S GPU

The NVIDIA L40S GPU is the most powerful universal GPU for the data centre, delivering end-to-end acceleration for the next generation of AI-enabled applications—from generative AI and model training and inference to 3D graphics, rendering, and video applications.

- NVIDIA Ada Lovelace Architecture

- 48 GB GDDR6 GPU memory with ECC

- 864 GB/s GPU memory bandwidth

- Includes fourth-generation Tensor Cores and an FP8 Transformer Engine, delivering over 1.45 petaflops of tensor processing power

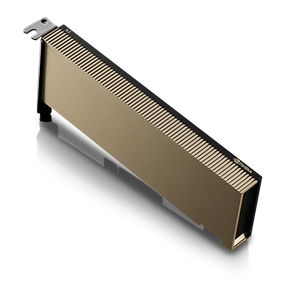

NVIDIA L4 GPU

The NVIDIA L4 Tensor Core GPU delivers a versatile platform to accelerate deep learning, graphics and video processing applications in the cloud and at the edge.

- NVIDIA Ada Lovelace Architecture

- 24 GB of GDDR6 memory and x16 PCIe Gen4 connectivity within a 72 W maximum power envelope

- 300 GB/s GPU memory bandwidth

- Servers equipped with L4 deliver up to 120X higher AI video performance than CPU-based solutions

NVIDIA L40 GPU

The NVIDIA L40 brings the highest level of power and performance for visual computing workloads in the data centre.

- NVIDIA Ada Lovelace Architecture

- 48 GB GDDR6 with ECC

- Fourth-generation Tensor Cores

- NVIDIA vPC/vApps, NVIDIA RTX Virtual Workstation (vWS)

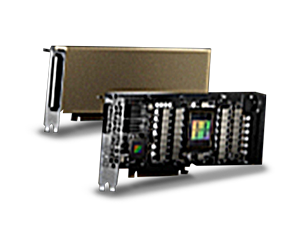

NVIDIA H100 Tensor Core GPU

The NVIDIA H100 Tensor Core GPU, powered by the NVIDIA Hopper GPU architecture, delivers the next massive leap in accelerated computing performance for NVIDIA’s data centre platforms.

- A new Transformer Engine enables H100 to deliver up to 9x faster AI training and up to 30x faster AI inference on large language models compared to the prior-generation A100

- Fourth-generation NVLink offers 900 gigabytes per second (GB/s) of GPU-to-GPU interconnect bandwidth

- Delivers 60 teraFLOPS of FP64 computing for HPC

- Delivers 3 terabytes per second (TB/s) of memory bandwidth per GPU and scalability with NVLink and NVSwitch

NVIDIA A16 Tensor Core GPU

Unlock an unprecedented VDI user experience.

- 4x 16GB GDDR6 with error-correcting code (ECC)

- GPU memory bandwidth of 4x 232GB/s

- More than 2x the encoder throughput of the previous-generation M10

- Supports multiple, high-resolution monitors (up to two 4K or a single 5K)

NVIDIA A10 Tensor Core GPU

Accelerated graphics and video with AI for mainstream enterprise servers.

- Ultra-fast GDDR6 memory, delivering 600 GB/s of bandwidth

- 24GB GDDR6 GPU memory

- Compact, single-slot, 150W GPU

- Tensor Float 32 (TF32) precision provides up to 5X the training throughput of the previous generation

NVIDIA A2 Tensor Core GPU

NVIDIA A2’s versatility, compact size, and low power exceed the demands of edge deployments at scale, instantly upgrading existing entry-level CPU servers to handle inference.

- Features a low-profile PCIe Gen4 card and a low 40-60W configurable thermal design power (TDP) capability

- Features 16 GB of GDDR6 memory

- Supports x8 PCIe Gen4 connectivity

- Powered by the NVIDIA Ampere Architecture